DAFNI Hardware at University College London – CASA

CASA UCL

Richard Milton, Senior Research Associate, CASA, UCL

The Centre for Advanced Spatial Analysis: CASA’s focus is to be at the forefront of what is one of the grand challenges of 21st century science: to build a science of cities from a multidisciplinary base, drawing on cutting edge methods, and ideas in modelling, complexity, visualisation and computation.

Our current mix of geographers, mathematicians, physicists, architects, planners and computer scientists makes CASA a unique institute within UCL. Our vision is to be central to this new science, the science of smart cities, and relate it to city planning, policy and architecture in its widest sense.

We pitched for the DAFNI Hardware Fund monies in order to purchase a number of servers and high performance computers as well as headsets and GPU hardware to take research in projects involving large quantities of data and model runs to the next level.

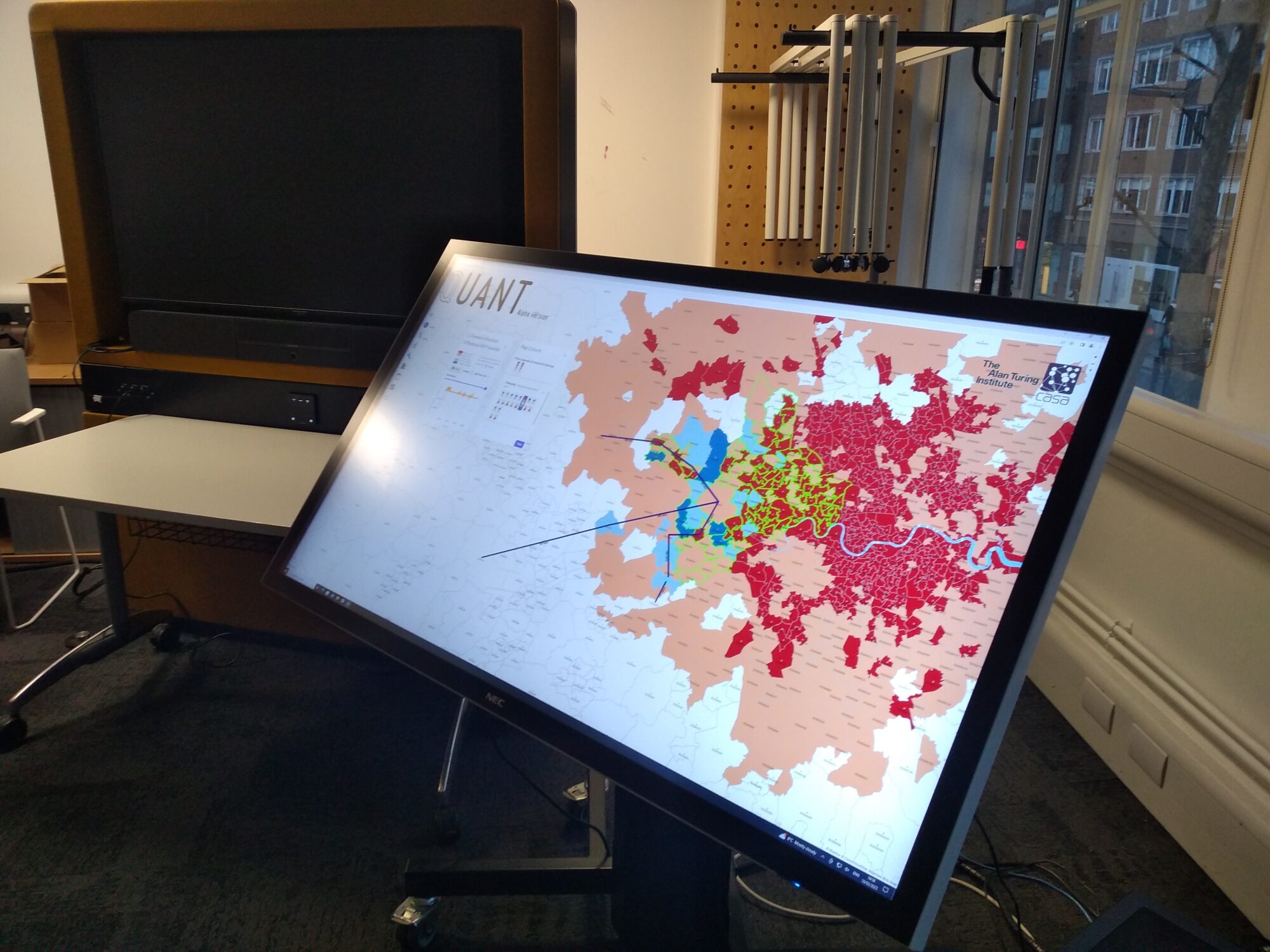

One project where we were immediately able to put the new hardware to good use is the QUANT project, which is funded by the Alan Turing Institute. This is a website which allows the general public to investigate the effects on residential location of changing employment and transport in the UK.

Typical QUANT scenarios involve predicting residential demand following the construction of a 3rd runway at Heathrow, or the effect on travel time of a project like High Speed 2 or Crossrail.

Having our own hardware through the DAFNI Hardware Fund means that we can build our own twin of a DAFNI node using Docker and Kubernetes and host QUANT publicly so that anybody can use it online.

The web server contains three NVIDIA A40 GPUs, which can be used to accelerate the gravity modelling equations that underpin QUANT’s scenario calculations.

The model has 8,436 zones at MSOA level for England, Scotland and Wales, using three modes of transport: road, bus and rail. The flow matrices and cost matrices contain 8436×8436 values each for the three modes, so a single model run of QUANT requires approximately 214 million computations of origin, destination and cost.

These calculations are inherently parallel, with no dependencies on the results of previous calculations, so the speedup we achieve by using the parallel GPU threads is significant.
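As a rough illustration of the scale and of why the work parallelises well, the sketch below counts the origin, destination and mode cells in one run and evaluates a singly constrained gravity model with a negative exponential cost function. This form is an assumption chosen for illustration: the article does not give QUANT's actual equations, and the names `cells_per_run`, `gravity_flows` and `beta` are hypothetical.

```python
import numpy as np

N_ZONES = 8436  # MSOA zones for England, Scotland and Wales (from the article)
N_MODES = 3     # road, bus and rail (from the article)


def cells_per_run(n_zones=N_ZONES, n_modes=N_MODES):
    """Number of origin-destination cells evaluated across all modes."""
    return n_modes * n_zones * n_zones


def gravity_flows(origins, attractions, cost, beta):
    """Assumed singly constrained gravity model for one mode.

    T_ij = O_i * A_j * exp(-beta * c_ij) / sum_k(A_k * exp(-beta * c_ik))

    Every cell of `weights` depends only on its own (i, j) inputs, so the
    cells can be computed in any order or all at once on parallel hardware.
    """
    weights = attractions[np.newaxis, :] * np.exp(-beta * cost)
    row_totals = weights.sum(axis=1, keepdims=True)  # one reduction per origin
    return origins[:, np.newaxis] * weights / row_totals


print(cells_per_run())  # 213498288, i.e. roughly 214 million

# Hypothetical three-zone example, not real QUANT data.
origins = np.array([100.0, 50.0, 25.0])
attractions = np.array([1.0, 2.0, 3.0])
cost = np.array([[0.0, 10.0, 20.0],
                 [10.0, 0.0, 15.0],
                 [20.0, 15.0, 0.0]])
flows = gravity_flows(origins, attractions, cost, beta=0.1)
print(flows.sum(axis=1))  # each row sums back to its origin total
```

The only coupling between cells in this form is the per-origin normalisation, which is itself a standard parallel reduction.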

This is a step change in performance from previous versions of the QUANT website, which now allows us to do more with the hardware provided by DAFNI.

Research boosted by new hardware

We have split the two servers between operational and research tasks, so that the production version of the QUANT website runs on the stable operational server, leaving the other free for research.

This gives researchers an environment based on Docker and Kubernetes that is close to the real DAFNI hardware at RAL, but is more flexible in terms of software development and debugging.

QUANT is a website accessible to the general public, but interaction with the model and visualisation of the model outputs are also improved by having everything localised.

Virtual Reality visualisation of model outputs is now possible using the VR goggles; previously we were using ESRI CityEngine or Unity to view results on a flatscreen monitor. The VR hardware opens up a new avenue of research, not possible before, into how this type of complex city modelling data is visualised.

Reducing run times to seconds

The new version of QUANT allows for road and bus scenarios, where only rail scenarios were possible before. This is a speed issue, as changing the transport network means re-running a shortest paths calculation for all possible routes. The term for this is “all pairs shortest path”, or APSP, where 8436×8436 possible node-to-node pairs make for 71 million possible shortest paths.

The rail network has only about 3,100 nodes (stations), whereas the road network has 3.5 million, which is why rail takes about two minutes and road takes 2.5 hours to calculate using the GPU.
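To make the all pairs shortest path idea concrete, here is a minimal sketch that runs Dijkstra's algorithm once from every origin zone. It is not necessarily the algorithm QUANT uses, which the article does not name; the function names and the toy network are hypothetical. Each origin's search is independent of the others, which is what makes the problem a good fit for parallel hardware.

```python
import heapq
import math


def dijkstra(adjacency, source):
    """Lowest travel cost from `source` to every reachable node."""
    dist = {source: 0.0}
    heap = [(0.0, source)]
    while heap:
        cost, node = heapq.heappop(heap)
        if cost > dist[node]:
            continue  # stale queue entry, a cheaper route was already found
        for neighbour, weight in adjacency.get(node, ()):
            new_cost = cost + weight
            if new_cost < dist.get(neighbour, math.inf):
                dist[neighbour] = new_cost
                heapq.heappush(heap, (new_cost, neighbour))
    return dist


def all_pairs_shortest_paths(adjacency, zones):
    """Cost table between every pair of zones, one search per origin."""
    table = {}
    for origin in zones:
        dist = dijkstra(adjacency, origin)
        # Unreachable destinations are reported as infinity.
        table[origin] = {dest: dist.get(dest, math.inf) for dest in zones}
    return table


# Hypothetical toy network: node -> list of (neighbour, travel cost).
toy_network = {
    "A": [("B", 4.0), ("C", 1.0)],
    "B": [("A", 4.0), ("C", 2.0)],
    "C": [("A", 1.0), ("B", 2.0)],
}
paths = all_pairs_shortest_paths(toy_network, ["A", "B", "C"])
print(paths["A"]["B"])  # 3.0, going via C rather than the direct link
print(8436 * 8436)      # 71166096 zone pairs at full scale
```

The cost of each search grows with the size of the network being searched, which is consistent with the road network taking far longer than rail.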

To make this interactive, all the calculations for a scenario need to be performed in under about 30 seconds, so we use an approximate method for the shortest paths, which gives us the interactivity that we need for the website. To prove that this optimisation works, though, we need to check it against the exact APSP algorithm, which is something that the GPU hardware lets us do offline, while also accelerating the approximate algorithm that a user of the website sees.
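One simple way to check an approximate cost matrix against an exact one is to summarise the differences between them. The sketch below is only an assumption about how such a check might look: the article does not describe the approximate method or the validation metrics, and `compare_costs` and the example matrices are hypothetical.

```python
import numpy as np


def compare_costs(exact, approx):
    """Summarise how far an approximate cost matrix is from the exact one."""
    exact = np.asarray(exact, dtype=float)
    approx = np.asarray(approx, dtype=float)
    if exact.shape != approx.shape:
        raise ValueError("matrices must have the same shape")

    abs_err = np.abs(approx - exact)
    # Relative error is only defined where the exact cost is non-zero,
    # which excludes the zero-cost diagonal (a zone to itself).
    mask = exact > 0
    rel_err = abs_err[mask] / exact[mask] if mask.any() else np.zeros(1)

    return {
        "max_abs_error": float(abs_err.max()),
        "mean_rel_error": float(rel_err.mean()),
        "max_rel_error": float(rel_err.max()),
    }


# Hypothetical two-zone example: the approximation overestimates one trip.
exact_costs = [[0.0, 10.0], [10.0, 0.0]]
approx_costs = [[0.0, 11.0], [10.0, 0.0]]
report = compare_costs(exact_costs, approx_costs)
print(report)
```

Run offline over a full set of zone pairs, a report like this would show whether the errors introduced by the approximation are small enough for the interactive website.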

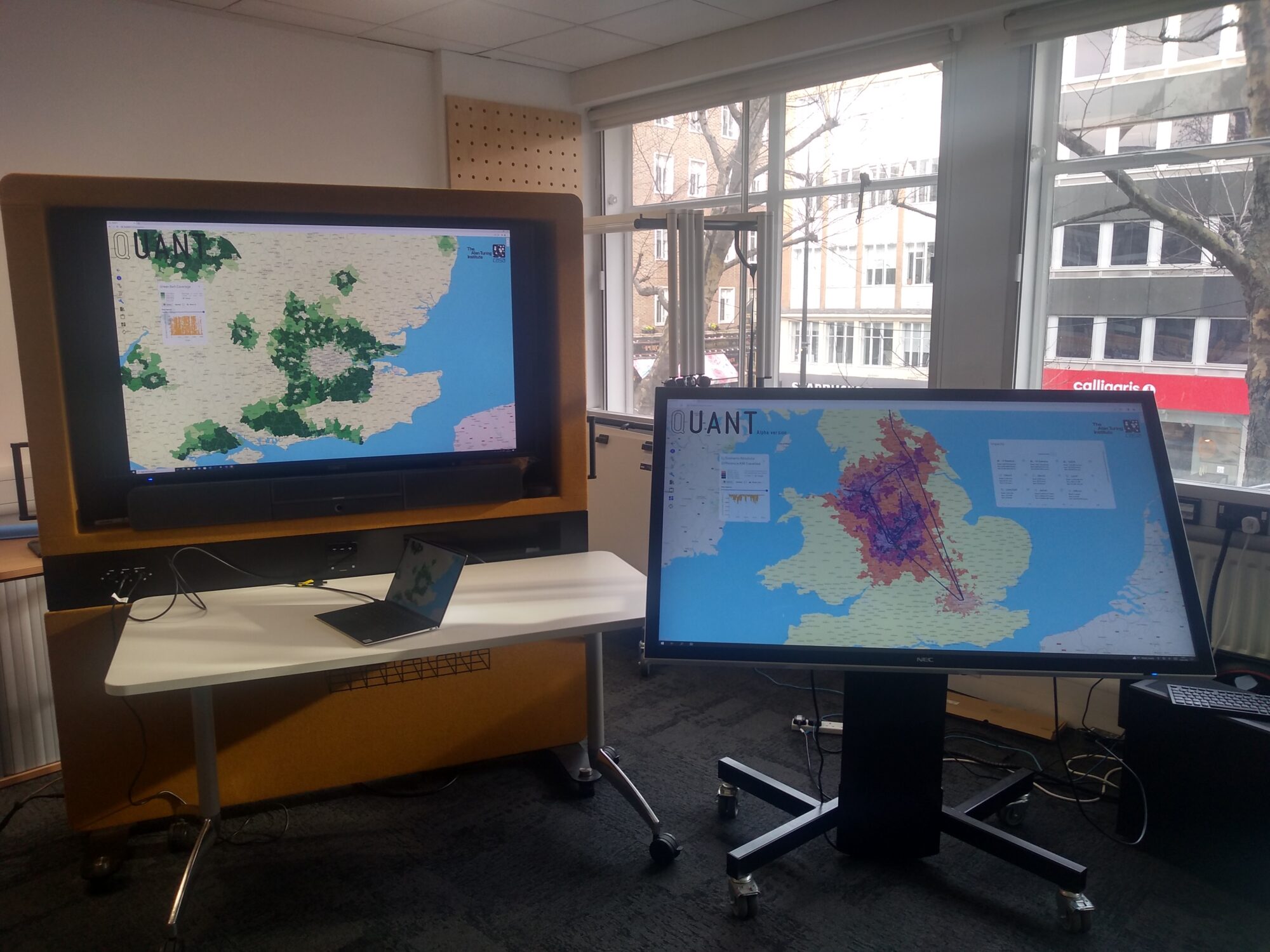

In addition to the website, we also have a touch table which was funded by the Alan Turing Institute. The touch table is run by one of the high end visualisation PCs purchased through the DAFNI Hardware Fund, which includes an NVIDIA A5000 GPU similar to the server GPUs. This architecture means that we can run the QUANT website on the table locally, giving a degree of interactivity for users that we didn’t have before.

The plan moving forward is to run scenario planning sessions using the touch table with groups of volunteers to explore how the QUANT model can be used in real planning scenarios.

Next steps: further visualisation and AI model research

We’ve only touched on the visualisation possibilities of VR so far, so in the future we’ll be researching wider use of VR to visualise both the effects of the model scenarios in the real world and how the model works internally.

A lot of the data we use is inherently multi-dimensional, so visualisation inside the model space of the input parameters is a possible line of research.

Also, the Tensor Cores contained in the NVIDIA A40 GPUs are designed for running Artificial Intelligence training or inference workloads. We have previously published some work on AI gravity models, but with this new hardware, we can now run large numbers of scenarios to calculate impact statistics and then use this data to train AI models to produce their own scenarios.

Comparisons of these computer-generated scenarios to real life scenarios like HS2, Crossrail and CaMKOx are interesting new directions of research which were not possible before.

Finally, the AI element can also be used with the touch table to give users hints as to how to optimise their plans.

Links with the DAFNI platform

The new hardware from DAFNI gives us the ability to write and test models on our own platform, before pushing the final working code to the DAFNI platform.

Getting back to DAFNI’s core promise of being able to link published models together, one idea that’s still waiting to be tested is to run a local model on our touch table, then link it to a published DAFNI model via our DAFNI user ID, then bring the DAFNI outputs back to our table for visualisation.

We have a lot of expertise in geographic visualisation systems that is still to be fully exploited through the DAFNI platform.

Hardware spec

2xPowerEdge R740XD, 2×2 Xeon 6230 2.1GHz, 2x4x1.2TB 10K drives, (2x12x16GB)=384 GB RAM, 2×3 NVIDIA A40 GPU (48GB)

2xDell N1108T-ON 8 Port 1GB Switches

12×1.2TB 10K HDD

7xSCAN Fractal Series Gaming PCs, Intel Core i9-11900K, 128GB 3200MHZ DDR4 RAM, 2TB HDD, 2TB NVMe SSD, NVIDIA RTX A5000 GPU

3xHTC VIVE Pro Eye V2 VR Headsets

3xHTC VIVE COSMOS ELITE VR Headsets

1xValve Index VR Headset

Dell 75 inch screen